Deep Reinforcement Learning for Cyber Threat Modelling

Skills: Reinforcement learning (RL), deep learning, neural network design and implementation, ICS security, systems control.

This project is the subject of the following IEEE journal article:

A. S. Mohamed and D. Kundur, "On the Use of Reinforcement Learning for Attacking and Defending Load Frequency Control," in IEEE Transactions on Smart Grid

Problem: Recent cyber incidents have raised serious concerns about cyberattacks targeting our critical infrastructure, including hospitals, electricity grids, gas pipelines, and the communication networks that provide telecom and internet services. These attacks pose significant risks to society. Notable examples include the 2015 cyberattack on Ukraine's electric grid, which caused a blackout affecting roughly 225,000 customers; a cyberattack on an Iranian nuclear enrichment facility, reported to have damaged one-fifth of the facility's centrifuges; and the discovery of the Triton malware at a Saudi Arabian petrochemical plant, which was capable of disabling safety instrumented systems and causing a plant disaster.

Challenge: The primary challenge in countering these cyberattacks is limited awareness of what attackers are capable of. We often do not know which vulnerabilities exist, which tactics cyberattackers employ, or how those vulnerabilities can be exploited to harm our critical infrastructure.

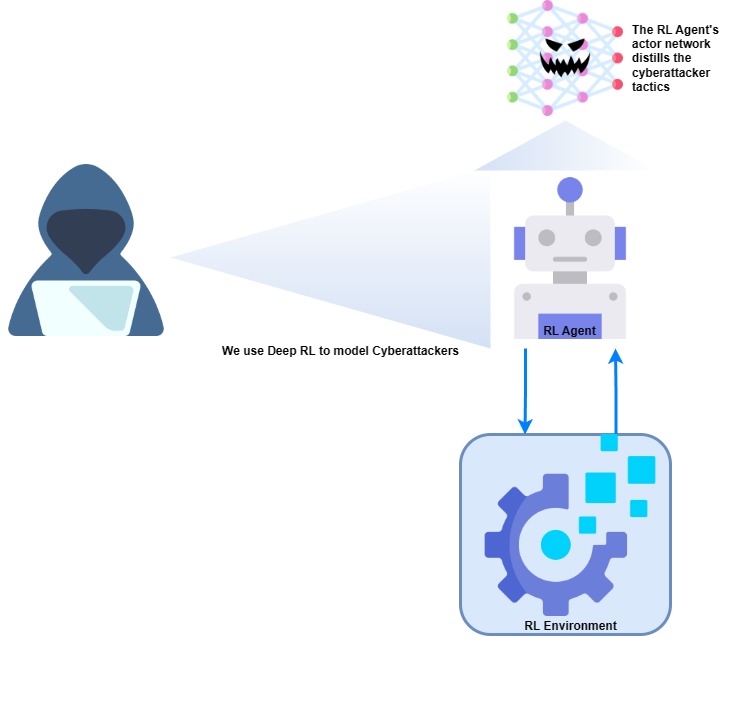

Method: We leverage Reinforcement Learning (RL) to create intelligent agents that simulate cyberattackers, providing proactive insight into potential vulnerabilities, the resources an attacker would seek in order to execute an attack, and the tactics that could be used to exploit those vulnerabilities. Alarmingly, the actor network of a trained deep RL agent can be easily adapted into a malicious program capable of launching attacks against our systems.
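To make the point about the actor network concrete: once training is finished, a deterministic actor is just a small function from observations to bounded actions, and the critic and training loop are no longer needed. The sketch below is a generic two-layer actor written in plain Python. The function names `make_actor` and `actor_forward`, the layer sizes, and the random weights standing in for trained ones are all assumptions for illustration, not the architecture used in the paper.

```python
import math
import random


def make_actor(obs_dim, hidden_dim, seed=0):
    """Return placeholder weights for a two-layer actor (hypothetical, untrained)."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-0.5, 0.5) for _ in range(obs_dim)] for _ in range(hidden_dim)]
    b1 = [0.0] * hidden_dim
    w2 = [rng.uniform(-0.5, 0.5) for _ in range(hidden_dim)]
    b2 = 0.0
    return w1, b1, w2, b2


def actor_forward(params, obs, action_bound):
    """Deterministic policy: observation -> scalar action in [-action_bound, action_bound]."""
    w1, b1, w2, b2 = params
    # Hidden layer with ReLU activation.
    hidden = [
        max(0.0, sum(w * x for w, x in zip(row, obs)) + b)
        for row, b in zip(w1, b1)
    ]
    # tanh squashes the output so the action always respects its bound.
    pre_activation = sum(w * h for w, h in zip(w2, hidden)) + b2
    return action_bound * math.tanh(pre_activation)


params = make_actor(obs_dim=3, hidden_dim=8)
action = actor_forward(params, obs=[0.01, -0.02, 0.0], action_bound=0.05)
```

The same observation always yields the same action, which is what distinguishes a deterministic policy gradient actor from a stochastic policy that samples its actions.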

Approach: This research involved developing an RL environment that simulates the dynamics of an industrial control system within a critical infrastructure system, specifically an electric grid. I then designed, trained, and fine-tuned a Deep Deterministic Policy Gradient (DDPG) RL agent with a deep neural network backbone. The reward functions and the RL agent's game were formulated to encourage learning of a malicious policy, one that simulates potential attacker behaviors capable of harming the critical system.
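As a rough illustration of what such an environment and reward could look like, the sketch below models a single-area load frequency control loop in which the agent's action is a bounded bias added to the frequency measurement feeding the secondary controller. It is a toy under assumed conditions, not the environment from the paper: the class name `ToyLFCEnv`, the helper `run_constant_bias`, the attack surface, every parameter value, the trip limit, and the reward (absolute frequency deviation) are hypothetical choices made for the example.

```python
class ToyLFCEnv:
    """Toy single-area load frequency control loop (per-unit, Euler integration)."""

    def __init__(self, dt=0.01, max_bias=0.05, trip_limit=0.02, max_steps=6000):
        # Illustrative parameters, assumed for this sketch only.
        self.M, self.D = 10.0, 1.0          # inertia and load damping
        self.Tg, self.Tt = 0.2, 0.5         # governor and turbine time constants (s)
        self.R = 0.05                       # droop
        self.Ki = 0.3                       # integral gain of the secondary controller
        self.beta = self.D + 1.0 / self.R   # frequency bias factor
        self.dt = dt
        self.max_bias = max_bias            # bound on the injected measurement bias
        self.trip_limit = trip_limit        # |frequency deviation| that trips the generator
        self.max_steps = max_steps
        self.reset()

    def reset(self):
        self.df = self.pm = self.pg = self.integ = 0.0
        self.steps = 0
        return (self.df, self.pm, self.pg)

    def step(self, action):
        # Clip the action, then corrupt the measurement seen by the controller.
        bias = max(-self.max_bias, min(self.max_bias, float(action)))
        ace = self.beta * (self.df + bias)  # area control error from the tampered reading
        d_df = (self.pm - self.D * self.df) / self.M
        d_pm = (self.pg - self.pm) / self.Tt
        d_pg = (-self.Ki * self.integ - self.df / self.R - self.pg) / self.Tg
        self.df += self.dt * d_df
        self.pm += self.dt * d_pm
        self.pg += self.dt * d_pg
        self.integ += self.dt * ace
        self.steps += 1
        tripped = abs(self.df) >= self.trip_limit
        reward = abs(self.df)               # larger deviation means larger reward
        done = tripped or self.steps >= self.max_steps
        return (self.df, self.pm, self.pg), reward, done, {"tripped": tripped}


def run_constant_bias(bias, env=None):
    """Roll out a fixed bias until the episode ends; return (tripped, steps)."""
    env = env or ToyLFCEnv()
    env.reset()
    done, info = False, {"tripped": False}
    while not done:
        _, _, done, info = env.step(bias)
    return info["tripped"], env.steps


tripped, steps = run_constant_bias(0.05)
```

In this toy loop the integral controller drives the tampered measurement to zero, so a sustained bias pulls the true frequency deviation toward the negative of that bias. A learning agent would replace the constant bias with its actor's output at every step and would be trained on the returned reward.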

Refer to our journal paper for details regarding the implementation.

Results: The animations below demonstrate the devastating impact of an attack policy learnt by the RL agent. They illustrate an attack against an electric grid that has four power generators, one in each quadrant, supplying power to four cities. The cities' lights are shown as white dots behind the generators. The 'breathing' orbs inside the generators indicate their health (frequency). A generator is forced out of operation when its orb grows out of bounds.